AI Development Platform MODELARTS - How to Print GPU Usage Information in Code: Using Python Commands

Time: 2024-11-06 21:52:48

Using Python commands

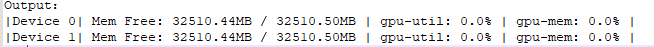

- Use nvidia-ml-py3 (commonly used).

```
!pip install nvidia-ml-py3
```

```python
import nvidia_smi

nvidia_smi.nvmlInit()
deviceCount = nvidia_smi.nvmlDeviceGetCount()
for i in range(deviceCount):
    handle = nvidia_smi.nvmlDeviceGetHandleByIndex(i)
    # util.gpu and util.memory are already integer percentages (0-100)
    util = nvidia_smi.nvmlDeviceGetUtilizationRates(handle)
    mem = nvidia_smi.nvmlDeviceGetMemoryInfo(handle)  # byte counts
    print(f"|Device {i}| Mem Free: {mem.free/1024**2:5.2f}MB / {mem.total/1024**2:5.2f}MB | gpu-util: {util.gpu:3.1f}% | gpu-mem: {util.memory:3.1f}% |")
nvidia_smi.nvmlShutdown()
```
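In NVML, the `gpu` and `memory` fields returned by `nvmlDeviceGetUtilizationRates` are integers that already express a percentage (0-100). `nvmlDeviceGetMemoryInfo`, by contrast, reports byte counts. If you format a percentage with Python's `%` format specifier, it is multiplied by 100 a second time. The sketch below isolates the formatting step so you can try it without a GPU. `format_device_line` is a hypothetical helper, and the sample numbers are made up.

```python
def format_device_line(index: int, free_bytes: int, total_bytes: int,
                       gpu_pct: int, mem_pct: int) -> str:
    """Build one status line from raw NVML-style values (hypothetical helper).

    free_bytes / total_bytes: bytes, as from nvmlDeviceGetMemoryInfo.
    gpu_pct / mem_pct: already 0-100, as from nvmlDeviceGetUtilizationRates.
    """
    mib = 1024 ** 2
    return (f"|Device {index}| Mem Free: {free_bytes / mib:5.2f}MB / "
            f"{total_bytes / mib:5.2f}MB | gpu-util: {gpu_pct:3.1f}% | "
            f"gpu-mem: {mem_pct:3.1f}% |")


# Made-up sample values, no GPU needed.
print(format_device_line(0, 8 * 1024**3, 16 * 1024**3, 45, 12))
# The "%" format spec multiplies by 100 again: 45 is rendered as "4500.0%".
print(f"{45:3.1%}")
```

The first call prints `|Device 0| Mem Free: 8192.00MB / 16384.00MB | gpu-util: 45.0% | gpu-mem: 12.0% |`.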

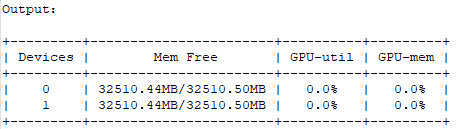

- Use nvidia_smi + wrapper + prettytable.

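You can package the GPU-information display as a decorator. Every call to the decorated function first prints a table of GPU status, so you can monitor the GPUs in real time during model training.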

```python
import functools


def gputil_decorator(func):
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        import nvidia_smi
        import prettytable as pt  # requires: pip install prettytable

        table = pt.PrettyTable(['Devices', 'Mem Free', 'GPU-util', 'GPU-mem'])
        try:
            nvidia_smi.nvmlInit()
            deviceCount = nvidia_smi.nvmlDeviceGetCount()
            for i in range(deviceCount):
                handle = nvidia_smi.nvmlDeviceGetHandleByIndex(i)
                res = nvidia_smi.nvmlDeviceGetUtilizationRates(handle)
                mem = nvidia_smi.nvmlDeviceGetMemoryInfo(handle)
                # res.gpu / res.memory are already percentages (0-100)
                table.add_row([i, f"{mem.free/1024**2:5.2f}MB/{mem.total/1024**2:5.2f}MB",
                               f"{res.gpu:3.1f}%", f"{res.memory:3.1f}%"])
            nvidia_smi.nvmlShutdown()
        except nvidia_smi.NVMLError as error:
            print(error)
        print(table)
        return func(*args, **kwargs)
    return wrapper
```
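The wrapper runs before every call. So if you decorate a per-epoch or per-step training function, a fresh table is printed each time that function is called.

The sketch below shows only that mechanism and runs on a CPU-only machine. The GPU query is passed in as a function, and `gpu_report`, `fake_query`, and `train_one_epoch` are all hypothetical names. `fake_query` stands in for the NVML calls in the decorator above.

```python
import functools
from typing import Callable, List, Tuple

# Hypothetical row layout: (device index, free MiB, total MiB, gpu %, mem %)
Row = Tuple[int, float, float, int, int]


def gpu_report(query: Callable[[], List[Row]]):
    """Decorator factory: print the rows returned by `query` before each call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for idx, free, total, gpu, mem in query():
                print(f"|{idx}|{free:.0f}MB/{total:.0f}MB|{gpu}%|{mem}%|")
            return func(*args, **kwargs)
        return wrapper
    return decorator


def fake_query() -> List[Row]:
    # Hypothetical stand-in for NVML so the example runs without a GPU.
    return [(0, 15000.0, 16384.0, 37, 5)]


@gpu_report(fake_query)
def train_one_epoch(epoch: int) -> str:
    return f"epoch {epoch} done"


for epoch in range(2):
    print(train_one_epoch(epoch))
```

In real use, you would replace `fake_query` with a function that makes the `nvmlDeviceGet...` calls. Passing the query in as an argument keeps the decorator itself easy to test.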

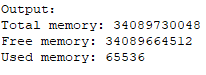

- Use pynvml.

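nvidia-ml-py3 (which provides the pynvml module) queries the NVML C library directly instead of going through nvidia-smi. As a result, it is much faster than wrappers built around nvidia-smi.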

```python
from pynvml import *

nvmlInit()
handle = nvmlDeviceGetHandleByIndex(0)  # first GPU
info = nvmlDeviceGetMemoryInfo(handle)  # values are in bytes
print("Total memory:", info.total)
print("Free memory:", info.free)
print("Used memory:", info.used)
nvmlShutdown()
```
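The values printed above are raw byte counts. The sketch below converts them to MiB and adds a used percentage. `MemInfo` is a hypothetical stand-in that only mimics the `total`/`free`/`used` attributes of the object returned by `nvmlDeviceGetMemoryInfo`, so the example runs without a GPU. `summarize_memory` is also a hypothetical helper.

```python
from collections import namedtuple

# Hypothetical stand-in with the same attribute names as the object
# returned by nvmlDeviceGetMemoryInfo (total, free, used; all in bytes).
MemInfo = namedtuple("MemInfo", ["total", "free", "used"])


def summarize_memory(info) -> dict:
    """Convert byte counts to MiB and add the used share as a percentage."""
    mib = 1024 ** 2
    return {
        "total_mib": info.total / mib,
        "free_mib": info.free / mib,
        "used_mib": info.used / mib,
        "used_pct": 100.0 * info.used / info.total if info.total else 0.0,
    }


sample = MemInfo(total=16 * 1024**3, free=12 * 1024**3, used=4 * 1024**3)
print(summarize_memory(sample))
```

With a real device, call `summarize_memory(nvmlDeviceGetMemoryInfo(handle))` instead of passing the sample.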

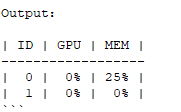

- Use gputil.

```
!pip install gputil
```

```python
import GPUtil as GPU

GPU.showUtilization()
```

```python
import GPUtil as GPU

GPUs = GPU.getGPUs()
for gpu in GPUs:
    # memoryFree/memoryUsed/memoryTotal are in MB; memoryUtil is a fraction (0-1)
    print("GPU RAM Free: {0:.0f}MB | Used: {1:.0f}MB | Util {2:3.0f}% | Total {3:.0f}MB".format(
        gpu.memoryFree, gpu.memoryUsed, gpu.memoryUtil * 100, gpu.memoryTotal))
```
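Unlike pynvml, GPUtil gets its numbers by running the `nvidia-smi` command and parsing the output. It is therefore the kind of nvidia-smi wrapper that pynvml is compared against above.

The sketch below shows that approach, assuming `nvidia-smi` is on the `PATH`. The query fields are standard `--query-gpu` names, while `parse_smi_csv` and `query_nvidia_smi` are hypothetical helpers. With `nounits`, memory figures come back as plain numbers in MiB.

```python
import subprocess
from typing import List

QUERY = "index,memory.free,memory.total,utilization.gpu"


def parse_smi_csv(text: str) -> List[dict]:
    """Parse `nvidia-smi --query-gpu=... --format=csv,noheader,nounits` output."""
    rows = []
    for line in text.strip().splitlines():
        idx, free, total, util = (field.strip() for field in line.split(","))
        rows.append({"index": int(idx), "free_mb": float(free),
                     "total_mb": float(total), "util_pct": float(util)})
    return rows


def query_nvidia_smi() -> List[dict]:
    """Run nvidia-smi; return [] if it is missing or fails (e.g. CPU-only node)."""
    try:
        out = subprocess.run(
            ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True).stdout
    except (OSError, subprocess.CalledProcessError):
        return []
    return parse_smi_csv(out)


# Made-up sample text in the shape nvidia-smi prints for two GPUs.
sample = "0, 15109, 16160, 3\n1, 16160, 16160, 0\n"
print(parse_smi_csv(sample))
print(query_nvidia_smi())
```

Each call starts a separate process, which is why querying NVML directly through pynvml is faster.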

Note: When you use a deep learning framework such as PyTorch or TensorFlow, you can also query this information through the framework's built-in APIs.
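With PyTorch, for example, you can read per-device figures from `torch.cuda`. The sketch below assumes a PyTorch release recent enough to provide `torch.cuda.mem_get_info`. `torch_gpu_report` is a hypothetical helper that returns an empty list when PyTorch or CUDA is unavailable, so it is safe to call on CPU-only nodes.

Note the different scopes of the figures:

- `memory_allocated` and `memory_reserved` only cover memory managed by PyTorch in the current process.
- `mem_get_info` reports free and total memory for the whole device.

```python
def torch_gpu_report() -> list:
    """Per-GPU memory figures from PyTorch's CUDA APIs; [] if unavailable."""
    try:
        import torch
    except ImportError:
        return []  # PyTorch is not installed
    if not torch.cuda.is_available():
        return []  # no usable CUDA device
    mib = 1024 ** 2
    report = []
    for i in range(torch.cuda.device_count()):
        free, total = torch.cuda.mem_get_info(i)  # device-wide, in bytes
        report.append({
            "device": i,
            "name": torch.cuda.get_device_name(i),
            "free_mib": free / mib,
            "total_mib": total / mib,
            # Memory used by tensors / held by PyTorch's caching allocator
            # in this process only
            "allocated_mib": torch.cuda.memory_allocated(i) / mib,
            "reserved_mib": torch.cuda.memory_reserved(i) / mib,
        })
    return report


for row in torch_gpu_report():
    print(row)
```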

support.huaweicloud.com/modelarts_faq/modelarts_05_0374.html
